Technology Assisted Review, or TAR as it’s more widely known, is a confusing topic for anyone in the eDiscovery world, but I fear that it’s most confusing for those at the law firm or in-house counsel office who are trying to explain it to the court, opposing counsel or to their own teams.

What is TAR and why should you care?

TAR is just what the acronym stands for – a review workflow aided by the use of various technologies employed at various times during the process. It is not AI, it is not science fiction, it’s not always predictive coding and it’s not as difficult as we are all making it.

Have you ever watched a TV series on Netflix and then when you logged in next, noticed the list of suggestions for other TV shows you’d like to watch? Think of that as TATV (technology assisted TV). There are certain data points that exist in the hosting of that TV series (name, type, actors, director, date, viewer rating) that the Netflix suggestion engine uses to recommend other similar TV series that you may like based on what you’ve already selected.

Have you ever run a search for a restaurant on Open Table and booked a reservation? Did you notice the next time you logged in there was a list of suggested restaurants you may like? Think of that as TAD (technology assisted dining). There are certain data points there too that the Open Table algorithm tracks to recommend other restaurants – in the area, with similar star ratings, of the same food type, etc.

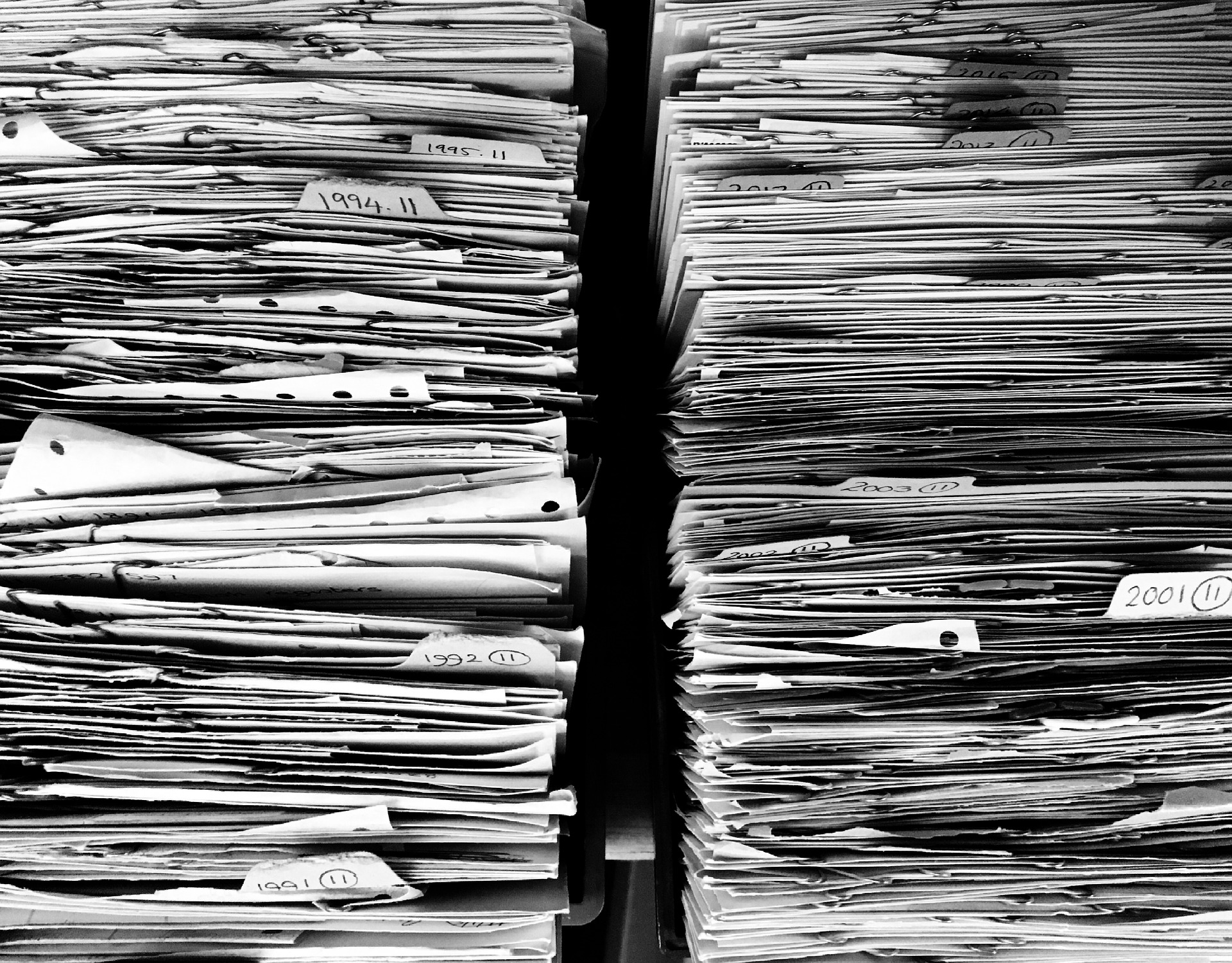

TAR is just a way to help us navigate through the thousands, tens of thousands or millions of documents that need to be reviewed in investigations and litigations. Reading thousands, or millions, of documents is tedious, time consuming and costly and there are all sorts of technologies that have hit the legal market in the last decade to make that reading (review) easier, faster and less expensive.

I’d like to change the current conversation around TAR. Let’s stop asking IF you should use TAR and let’s start asking HOW you should use it. The IF question is tired and the technology has been out there long enough, with enough key examples of success, that it’s an obsolete question. So, how should you use TAR?

Here are a few quick ideas for high-impact ways to incorporate TAR into your next review.

- Run thread grouping on your email collections so you can read emails in context and can quickly review entire conversations rather than individual emails.

- Run near-duplicate detection and group those very textually similar documents together as well (usually 90% similarity or above). With all the various versions of marketing, sales and contract drafts, isn’t it smarter to review all versions of the same document together? That way you can make faster, more consistent decisions.

- On received productions, run dynamic clustering and see what types of groupings exist. Clustering’s grouping of similar documents can allow you to spot review large sets of conceptually similar documents and either mass tag them for further review or mass tag them for zero review. This can cut your review time in half before you even begin officially reviewing.

- Also on received productions, after you use clustering to cut your population in half, run some searches to issue-tag documents, then let the system issue-code the rest based on your decisions on the first set. The tags won’t be perfect, but they’ll be pretty close and you can refine them as you go. Plus, with a continuous active learning (CAL) system, the population will continuously get updated with your refinements.
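
To make the near-duplicate idea above a little more concrete, here is a minimal Python sketch. It is not how any particular review platform does it; commercial tools use their own (usually proprietary) algorithms. The function names, the 3-word "shingle" size and the use of Jaccard similarity are all assumptions chosen for illustration. Only the 90% threshold comes from the rule of thumb above.

```python
import re
from itertools import combinations


def shingles(text, k=3):
    """Return the set of overlapping k-word runs ("shingles") in the text."""
    words = re.findall(r"\w+", text.lower())
    if len(words) < k:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a, b):
    """Share of shingles two documents have in common (0.0 to 1.0)."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def group_near_duplicates(docs, threshold=0.9):
    """Group document ids whose text is at least `threshold` similar.

    docs is a dict of doc_id -> text. A simple union-find keeps chains
    together: if A matches B and B matches C, all three share a group.
    """
    sets = {doc_id: shingles(text) for doc_id, text in docs.items()}
    parent = {doc_id: doc_id for doc_id in docs}

    def find(x):
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # shorten the path as we walk up
            x = parent[x]
        return x

    # Compare every pair; fine for a sketch, too slow for millions of documents.
    for a, b in combinations(docs, 2):
        if jaccard(sets[a], sets[b]) >= threshold:
            parent[find(a)] = find(b)

    groups = {}
    for doc_id in docs:
        groups.setdefault(find(doc_id), []).append(doc_id)
    return sorted(sorted(group) for group in groups.values())
```

Feed it a dictionary of document ids and extracted text, and each returned group is a set of drafts a reviewer could look at side by side. The pairwise comparison is the part a real tool would replace with something far more scalable.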

Predictive Coding tools are a little more complex (and I stress the word little), but they should also be included in this question of how.

Predictive Coding (PC) doesn’t have to be scary or the beginning of an argument. Personally, I think if you use search terms, PC is a must for large document collections. You’ve agreed to the search terms and you’ve culled the population based on those terms. How you go about reviewing documents from that point forward is no one’s business but your own. Predictive coding can make it easy for you to improve your recall (the share of all Responsive documents in a set that you actually find), and isn’t that the goal?

- From your search results set, seed a few known Responsive documents or pick a few KEY search terms, review those and then use a CAL system to help you find the next documents you should review (the next most likely Responsive documents). The system will learn from your validated (read: QC’d) decisions, and every time you ask the system “what’s next?” it will provide more likely Responsive documents until there just aren’t any more documents that are likely Responsive (usually below roughly 50% similarity to your known Responsive decisions). At that point, you can either sample or search in your Likely Not Responsive pool of documents or send all Likely Not Responsive documents to a lower cost resource somewhere (onshore, offshore, law schools, paralegals, etc.).
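
For the curious, the “what’s next?” loop described above can be sketched in a few lines of Python. This is a toy under stated assumptions, not any vendor’s implementation: real CAL systems train a proper classifier, whereas this sketch simply scores each unreviewed document by its word overlap with the closest known Responsive document. The names `cal_review`, `review`, `batch_size` and `cutoff` are hypothetical, and the 0.5 cutoff mirrors the rough 50% figure mentioned above.

```python
import re


def tokens(text):
    """Lower-cased set of words in a document."""
    return set(re.findall(r"\w+", text.lower()))


def similarity(a, b):
    """Jaccard word overlap between two token sets (0.0 to 1.0)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def cal_review(docs, seed_ids, review, batch_size=2, cutoff=0.5):
    """Toy continuous active learning loop.

    docs:     dict of doc_id -> text
    seed_ids: ids already known to be Responsive
    review:   callable(doc_id) -> bool, standing in for the human
              reviewer's validated (QC'd) decision
    Returns (responsive, not_responsive, likely_not_responsive) as sets.
    """
    if not seed_ids:
        raise ValueError("need at least one known Responsive seed document")
    toks = {doc_id: tokens(text) for doc_id, text in docs.items()}
    responsive = set(seed_ids)
    not_responsive = set()
    unreviewed = set(docs) - responsive

    while unreviewed:
        # Rank what is left by similarity to the closest Responsive document.
        scored = sorted(
            ((max(similarity(toks[d], toks[r]) for r in responsive), d)
             for d in unreviewed),
            reverse=True,
        )
        batch = [d for score, d in scored[:batch_size] if score >= cutoff]
        if not batch:
            break  # nothing left above the cutoff: stop asking "what's next?"
        for d in batch:
            # Each human decision feeds the next round of ranking.
            (responsive if review(d) else not_responsive).add(d)
            unreviewed.discard(d)

    return responsive, not_responsive, unreviewed
```

Whatever is still unreviewed when the loop stops is the Likely Not Responsive pool, which is the set you would then sample, search or route to a lower cost resource.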

The process for document review has not changed in about 50 years and it’s time that we stop reviewing everything and start reviewing smarter. Let’s look at the documents that are important and not waste time (or money) reviewing all of the Likely Not Responsive documents. Think of TAR as your super Reviewer – she’s read every single document and based on your decisions, she can predict what else you’re interested in. This way everyone wins – requesting parties get what they’re looking for and not a lot of garbage, and producing parties don’t spend countless dollars reading through documents that were false positive hits on search terms or are just not really Responsive.

TAR should be an oft-used and integral part of any discovery process because it is only there to help us review better, understand our data better and get to the point faster. Not using TAR is a fool’s errand and as Mr. T says, “I pity da fool!”